AI Futures Timelines and Takeoff Model: Dec 2025 Update

Credibility Rating

Good quality. Reputable source with community review or editorial standards, but less rigorous than peer-reviewed venues.

Rating inherited from publication venue: LessWrong

A LessWrong post providing a quantitative update to an AI timelines and takeoff model, useful for those tracking capability forecasts and debating the pace of transformative AI development as of late 2025.

Summary

A December 2025 update to a quantitative model that forecasts AI capability milestones, including full coding automation (the Automated Coder milestone) and artificial superintelligence (ASI). Compared with the authors' previous AI 2027 models, it pushes the forecast for full coding automation back by about 3-5 years. A significant part of the reason is that it is less bullish about how much AI speeds up AI R&D before coding is fully automated. The authors argue for transparent, explicit modeling as a tool for reasoning about AI development trajectories.

Key Points

- The updated model predicts full coding automation about 3-5 years later than the previous AI 2027 models did.

- Key milestones tracked include full coding automation (Automated Coder) and artificial superintelligence (ASI), with explicit probabilistic forecasts.

- Only some parameters are grounded in empirical data; the rest are intuitive guesses, and the authors say so openly.

- The authors argue that an explicit model makes their reasoning transparent, instead of weighing considerations implicitly in their heads.

- Less bullish assumptions about AI R&D speedups before full coding automation account for a significant part of the timeline revision.

Cited by 1 page

| Page | Type | Quality |
|---|---|---|
| AI Timelines | Concept | 95.0 |

Cached Content Preview

# AI Futures Timelines and Takeoff Model: Dec 2025 Update

By elifland, bhalstead, Alex Kastner, Daniel Kokotajlo

Published: 2025-12-31

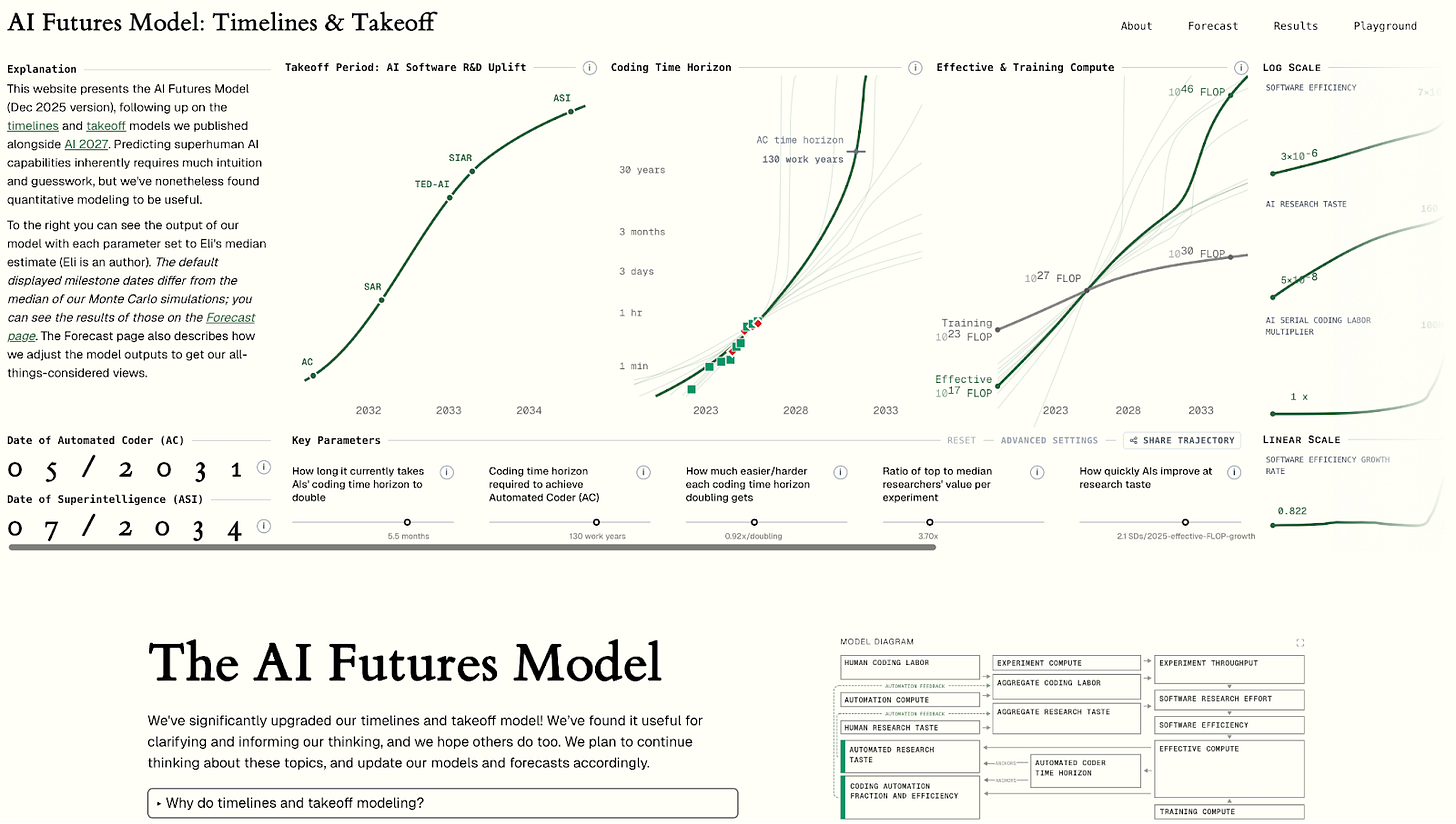

We’ve significantly upgraded our timelines and takeoff model! It predicts when AIs will reach key capability milestones: for example, Automated Coder / AC (full automation of coding) and superintelligence / ASI (much better than the best humans at virtually all cognitive tasks). This post will briefly explain how the model works, present our timelines and takeoff forecasts, and compare it to our previous ([AI 2027](https://ai-2027.com/research)) models (spoiler: the AI Futures Model predicts longer timelines to full coding automation than our previous model by about 3-5 years, in significant part due to being less bullish on pre-full-automation AI R&D speedups). *Added Jan 2026: see* [*here*](https://www.lesswrong.com/posts/qPco9BX5kmKCDzzW9/clarifying-how-our-ai-timelines-forecasts-have-changed-since) *for clarifications regarding how our forecasts have changed since AI 2027.*

If you’re interested in playing with the model yourself, the best way to do so is via this interactive website: [**aifuturesmodel.com**](http://aifuturesmodel.com).

*If you’d like to skip the motivation and go straight to how our model works, go* [*here*](#How_our_model_works)*. The website has a more in-depth explanation of the model (it starts* [*here*](https://www.aifuturesmodel.com/#section-timehorizonandtheautomatedcodermilestone)*; use the diagram on the right as a table of contents), as well as* [*our forecasts*](https://www.aifuturesmodel.com/forecast)*.*

Why do timelines and takeoff modeling?
======================================

The future is very hard to predict. We don't think this model, or any other model, should be trusted completely. The model takes into account what we think are the most important dynamics and factors, but it doesn't take into account everything. Also, only some of the parameter values in the model are grounded in empirical data; the rest are intuitive guesses. If you disagree with our guesses, you can change them in the interactive version of the model at aifuturesmodel.com.

Nevertheless, we think that modeling work is important. Our overall view is the result of weighing many considerations, factors, arguments, etc.; a model is a way to do this transparently and explicitly, as opposed to implicitly and all in our head. By reading about our model, you can come to understand why we have the views we do, what arguments and trends seem most important to us, etc.

The future is uncertain, but we shouldn’t just wait for it to arrive. If we try to predict what will happen, if we pay attention to the trends and extrapolate them, if we build models of the underlying dynamics, then we'll have a better sense of what is likely, and we'

... (truncated, 53 KB total)