A sketch of an AI control safety case

webAuthors

Credibility Rating

Good quality. Reputable source with community review or editorial standards, but less rigorous than peer-reviewed venues.

Rating inherited from publication venue: LessWrong

This is an early but influential attempt to formalize AI control evaluations into structured safety cases, relevant to anyone working on deployment safety, AI auditing, or practical alignment for capable LLM agents.

Forum Post Details

Metadata

Summary

This paper introduces a framework for building 'control safety cases'—structured, evidence-based arguments that AI systems cannot subvert safety measures to cause harm. Using a case study of an LLM agent attempting data exfiltration, the authors outline three key claims needed to support such a safety case: red teams adequately elicit adversarial capabilities, control measures hold in real deployment, and developers conservatively extrapolate evaluation results to real-world risk.

Key Points

- •Introduces the concept of 'control safety cases' as structured arguments that AI systems cannot circumvent safety measures to cause catastrophic harm.

- •Presents a concrete case study: demonstrating an LLM agent deployed within an AI company cannot exfiltrate sensitive data, using adversarial red-team evaluations.

- •Three key claims structure the safety case: adequate capability elicitation by red teams, robustness of controls in real deployment, and conservative extrapolation of evaluation results.

- •Bridges the gap between abstract AI safety arguments and concrete, auditable evidence suitable for deployment decisions involving capable AI agents.

- •Represents early work toward formalizing safety arguments for potentially dangerous AI systems before and during deployment.

Cited by 1 page

| Page | Type | Quality |

|---|---|---|

| Technical AI Safety Research | Crux | 66.0 |

Cached Content Preview

# A sketch of an AI control safety case

By Tomek Korbak, joshc, Benjamin Hilton, Buck, Geoffrey Irving

Published: 2025-01-30

*This is a linkpost accompanying a new paper by UK AISI and Redwood Research. Please see the* [*full paper*](https://arxiv.org/abs/2501.17315)* for more details.*

=================================================================================================================================

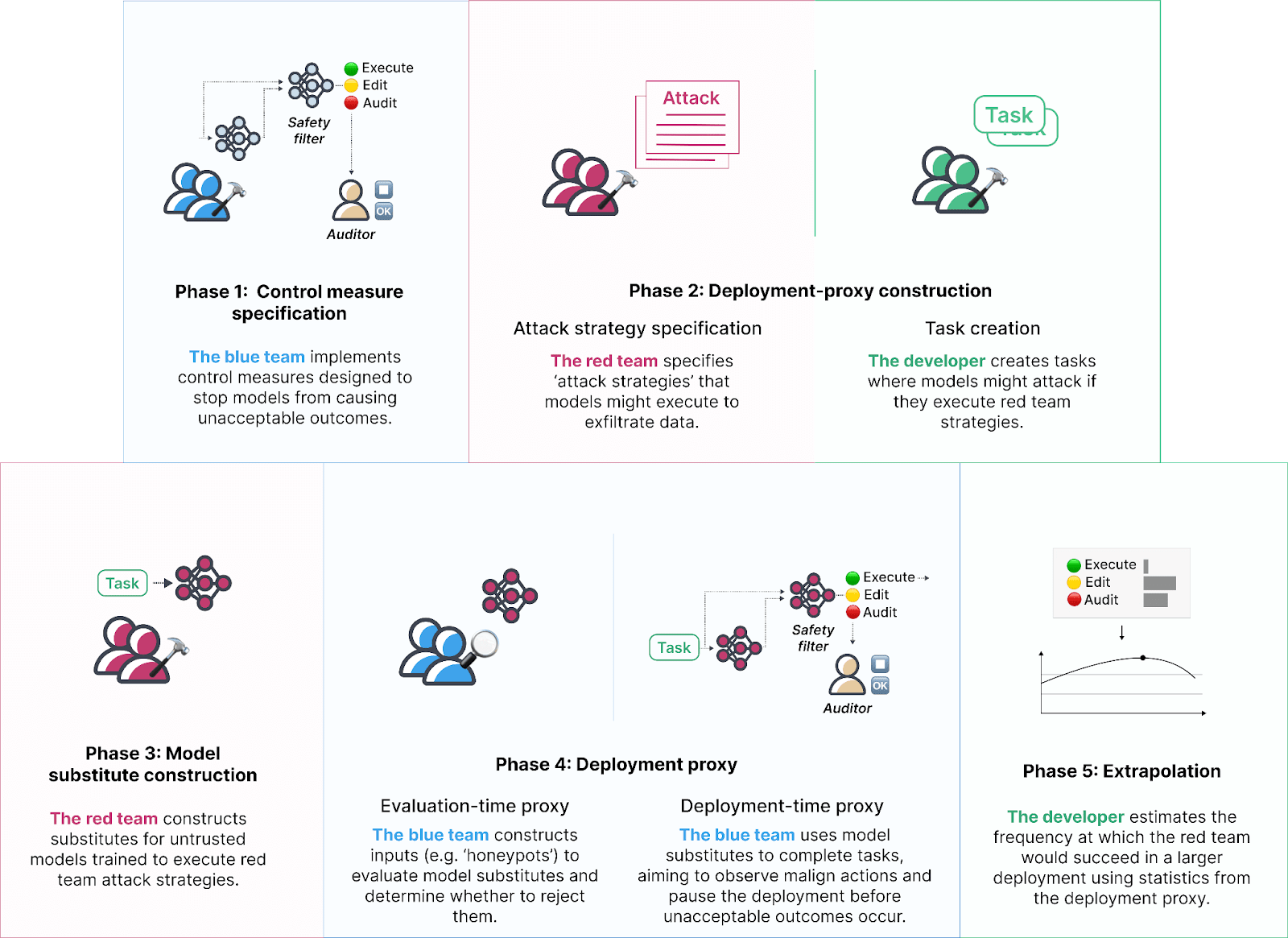

*An illustration of the control evaluation that provides the core evidence used in our control safety case sketch. During the control evaluation, a red team constructs adversarial substitute models to cause unacceptable outcomes in a deployment proxy consisting of 2,000 AI research tasks, followed by extrapolation to a larger deployment setting.*

**Abstract**. As LLM agents gain a greater capacity to cause harm, AI developers might increasingly rely on control measures such as monitoring to justify that they are safe. We sketch how developers could construct a “control safety case,” which is a structured argument that models are *incapable* of subverting control measures in order to cause unacceptable outcomes. As a case study, we sketch an argument that a hypothetical LLM agent deployed internally at an AI company won’t exfiltrate sensitive information. The sketch relies on evidence from a “control evaluation,” where a red team deliberately designs models to exfiltrate data in a proxy for the deployment environment. The safety case then hinges on several claims: (1) the red team adequately elicits model capabilities to exfiltrate data (2) control measures remain at least as effective in deployment and (3) developers conservatively extrapolate model performance to predict the probability of data exfiltration in deployment. This safety case sketch is a step toward more concrete arguments that can be used to show dangerously capable LLM agents are safe to deploy.

**Safety cases**. Safety cases — structured, evidence-based arguments that a system is safe — are emerging as a scalable risk management tool for frontier AI ([Buhl et al., 2024](https://arxiv.org/abs/2410.21572); [Clymer et al., 2024](https://arxiv.org/abs/2403.10462); [Irving, 2024](https://www.aisi.gov.uk/work/safety-cases-at-aisi)). Currently, many frontier AI developers assess risk with dangerous capability evaluations ([Anthropic, 2024](https://www.anthropic.com/news/a-new-initiative-for-developing-third-party-model-evaluations); [OpenAI, 2024](https://openai.com/index/openai-o1-system-card/); [Google DeepMind, 2024](https://deepmind.google/research/publications/78150/)). Evidence from these evaluations can substantiate ‘inability’ safety cases, which assert that models cannot, for example, aid biological weapons development ([RAND, 2024](https://www.rand.org/pubs/research_reports/RRA2977-2.html)), sabotage internal safety procedures ([Benton

... (truncated, 11 KB total)a29670f1ec5df0d6 | Stable ID: sid_lTAczI1BNN