Anthropic's Responsible Scaling Policy & Long-Term Benefit Trust

webAuthor

Credibility Rating

Good quality. Reputable source with community review or editorial standards, but less rigorous than peer-reviewed venues.

Rating inherited from publication venue: LessWrong

This LessWrong post summarizes Anthropic's RSP, a significant industry governance document establishing concrete safety commitments tied to capability thresholds; relevant for researchers studying AI policy, deployment standards, and institutional approaches to catastrophic risk management.

Forum Post Details

Metadata

Summary

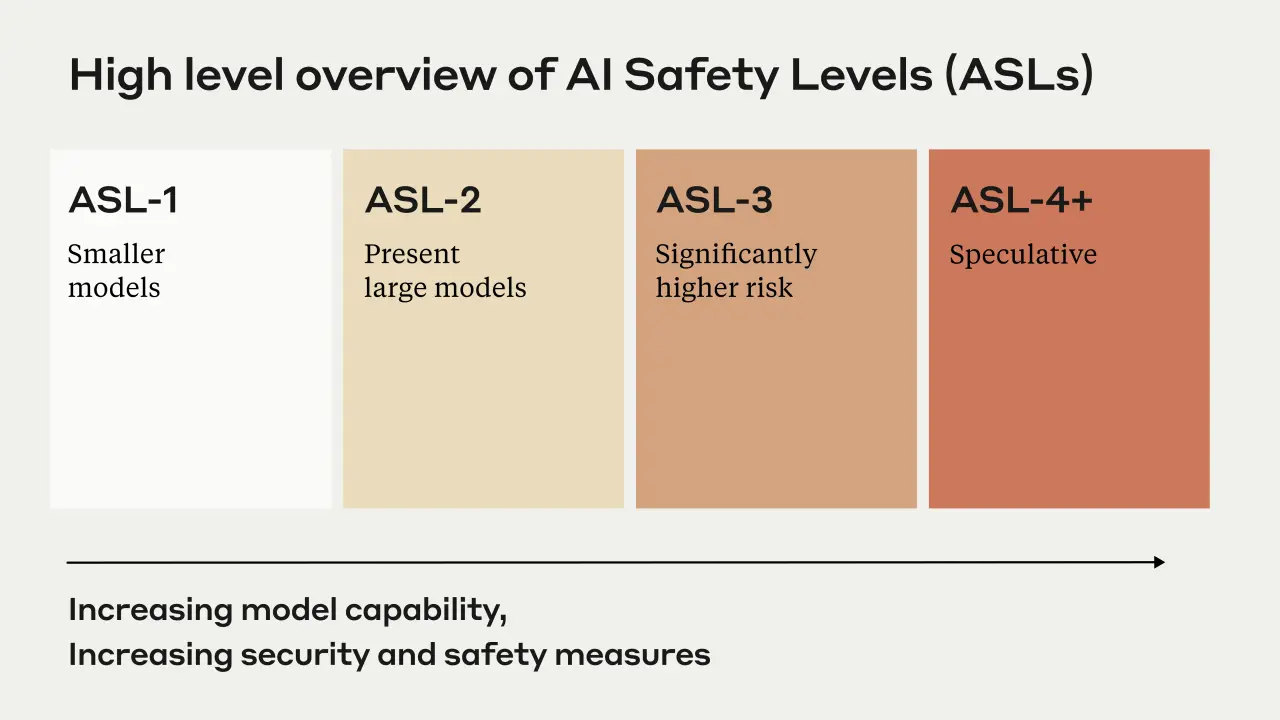

Anthropic's Responsible Scaling Policy introduces AI Safety Levels (ASL), a tiered framework modeled on biosafety standards that mandates escalating safety and security requirements as AI capabilities increase. Current models like Claude are classified ASL-2, while higher levels trigger stricter red-teaming, interpretability requirements, and operational controls. The policy also establishes the Long-Term Benefit Trust as a novel governance mechanism for overseeing transformative AI development.

Key Points

- •ASL framework defines escalating safety requirements based on a model's potential for catastrophic harm, including bioweapon creation or autonomous harmful behavior.

- •Current Claude models are classified ASL-2; ASL-3 and above require adversarial red-teaming, enhanced security measures, and potentially interpretability-based assurance.

- •The policy creates a conditional commitment: Anthropic will pause deployment or development if adequate safety measures cannot be demonstrated for a given ASL tier.

- •The Long-Term Benefit Trust is introduced as a governance body intended to ensure Anthropic's mission remains aligned with broad human benefit over time.

- •The RSP is explicitly modeled after biosafety level (BSL) standards, applying a similar tiered risk-management approach to frontier AI systems.

Cited by 1 page

| Page | Type | Quality |

|---|---|---|

| Anthropic Long-Term Benefit Trust | Organization | 70.0 |

Cached Content Preview

# Anthropic's Responsible Scaling Policy & Long-Term Benefit Trust

By Zac Hatfield-Dodds

Published: 2023-09-19

*I'm delighted that Anthropic has formally committed to [our responsible scaling policy](https://www-files.anthropic.com/production/files/responsible-scaling-policy-1.0.pdf).

We're also sharing more detail about the [Long-Term Benefit Trust](https://www.anthropic.com/index/the-long-term-benefit-trust), which is our attempt to fine-tune our corporate governance to address the unique challenges and long-term opportunities of transformative AI.*

----------

Today, we’re [publishing our Responsible Scaling Policy (RSP)](https://www-files.anthropic.com/production/files/responsible-scaling-policy-1.0.pdf) – a series of technical and organizational protocols that we’re adopting to help us manage the risks of developing increasingly capable AI systems.

As AI models become more capable, we believe that they will create major economic and social value, but will also present increasingly severe risks. Our RSP focuses on catastrophic risks – those where an AI model directly causes large scale devastation. Such risks can come from deliberate misuse of models (for example use by terrorists or state actors to create bioweapons) or from models that cause destruction by acting autonomously in ways contrary to the intent of their designers.

Our RSP defines a framework called AI Safety Levels (ASL) for addressing catastrophic risks, modeled loosely after the US government’s biosafety level (BSL) standards for handling of dangerous biological materials. The basic idea is to require safety, security, and operational standards appropriate to a model’s potential for catastrophic risk, with higher ASL levels requiring increasingly strict demonstrations of safety.

A very abbreviated summary of the ASL system is as follows:

- ASL-1 refers to systems which pose no meaningful catastrophic risk, for example a 2018 LLM or an AI system that only plays chess.

- ASL-2 refers to systems that show early signs of dangerous capabilities – for example ability to give instructions on how to build bioweapons – but where the information is not yet useful due to insufficient reliability or not providing information that e.g. a search engine couldn’t. Current LLMs, including Claude, appear to be ASL-2.

- ASL-3 refers to systems that substantially increase the risk of catastrophic misuse compared to non-AI baselines (e.g. search engines or textbooks) OR that show low-level autonomous capabilities.

- ASL-4 and higher (ASL-5+) is not yet defined as it is too far from present systems, but will likely involve qualitative escalations in catastrophic misuse potential and autonomy.

The definition, criteria, and safety measures for each ASL level are described in detail in the main document, but at a high level, ASL-2 measures represent our current safety and security standards and overlap signific

... (truncated, 9 KB total)ae8c6faf4fc25cff | Stable ID: sid_8lJn1PNcVT