2023 AI Impacts survey

web

Credibility Rating

Good quality. Reputable source with community review or editorial standards, but less rigorous than peer-reviewed venues.

Rating inherited from publication venue: AI Impacts

This is a landmark empirical survey of AI researcher opinion; frequently cited as evidence of expert concern about AI risk and as a reference point for AI timeline forecasting in safety discussions.

Metadata

Summary

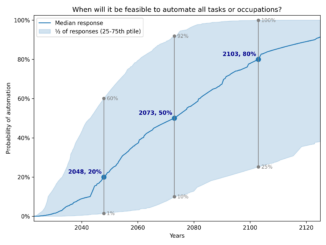

AI Impacts surveyed 2,778 AI researchers in 2023 about their expectations for AI progress, safety risks, and transformative milestones. The survey captures expert probability estimates for high-level machine intelligence, catastrophic risk, and the importance of AI safety research. It is one of the largest and most comprehensive surveys of AI researcher opinion on existential and transformative AI risk.

Key Points

- The median respondent estimated a 5% chance of extremely bad outcomes (e.g., human extinction) from AI, with significant variance across researchers.

- A majority of respondents believed AI safety research is important, and many supported slowing AI development under certain conditions.

- Estimated timelines for high-level machine intelligence (HLMI) shortened notably compared with the earlier AI Impacts surveys from 2016 and 2022.

- The survey included six questions specifically targeting AI safety experts, providing a dedicated subsample for safety-focused analysis.

- Results highlight deep uncertainty and disagreement among experts on both timelines and the magnitude of AI-related risks.

Cited by 1 page

| Page | Type | Quality |
|---|---|---|
| AGI Timeline | Concept | 59.0 |

Cached Content Preview

It seems we can’t find what you’re looking for. You might be looking for a page that has been made private.

#### Latest Articles

- [How should we analyse survey forecasts of AI timelines?](https://aiimpacts.org/how-should-we-analyse-survey-forecasts-of-ai-timelines/)

Tom Adamczewski, 2024. The Expert Survey on Progress in AI (ESPAI) is a large survey of AI researchers about the future of AI, conducted in 2016, 2022, and 2023. One main focus of the survey ...

- [The purpose of philosophical AI will be: To orient ourselves in thinking](https://aiimpacts.org/the-purpose-of-philosophical-ai-will-be-to-orient-ourselves-in-thinking/)

- [Machines and Moral Judgment](https://aiimpacts.org/machines-and-moral-judgment/)

- [Towards the Operationalization of Philosophy & Wisdom](https://aiimpacts.org/towards-the-operationalization-of-philosophy-wisdom/)

- [Some Preliminary Notes on the Promise of a Wisdom Explosion](https://aiimpacts.org/some-preliminary-notes-on-the-promise-of-a-wisdom-explosion/)

#### Popular Articles

- [AI Timeline Surveys](https://aiimpacts.org/ai-timeline-surveys/)

This page is out-of-date. Visit the

... (truncated, 5 KB total)

efb578b3189ba3cb | Stable ID: sid_x5GAVo7YAU